The Quiet Promotion: Why Agentic AI Moves Your Role Upstream

The most frequent question I hear when I run sessions on agentic AI is some version of the same thing. "What does my role look like when this is...

If you work in government or a regulated industry, there is one sentence worth pinning above your desk before your organization deploys its first agentic AI system. Human-in-the-loop is not a feature you add to an autonomous system. It is the product.

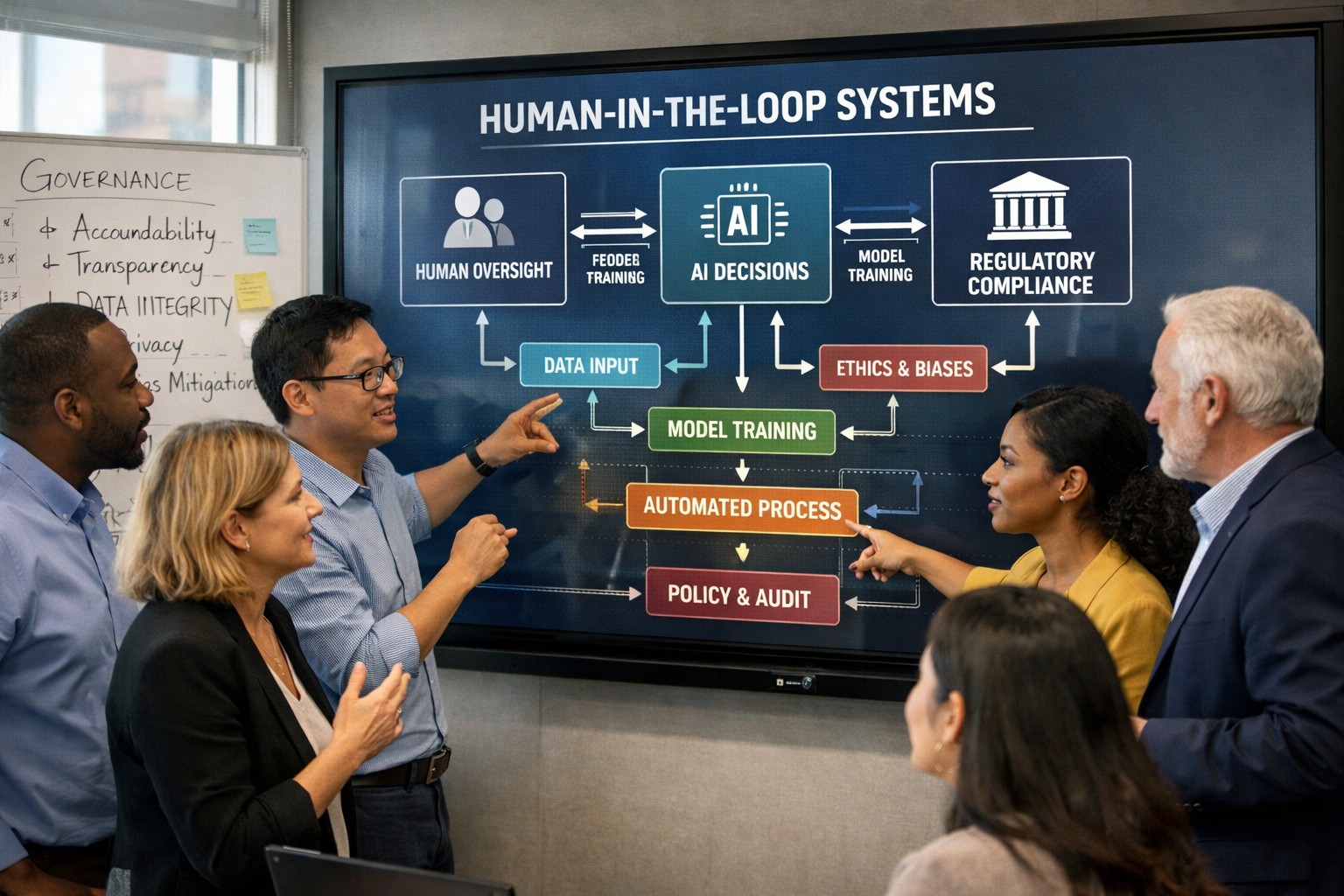

The phrase "human-in-the-loop" became common in machine learning, where a human reviews and corrects model outputs during supervised training. In the agentic era it has taken on a broader meaning: any architectural pattern that keeps a human involved at the critical decision points of an autonomous system. For organizations where mistakes have regulatory, legal, or public-trust consequences, these patterns are not optional enhancements. They are prerequisites.

The case for human oversight starts with a technical reality that many executives still underestimate. Large language models, as they are typically run, are non-deterministic. The same prompt can produce different outputs on different runs. When an LLM is wrapped in an agentic loop (planning, calling tools, acting on the world), that non-determinism compounds with every step. An agent can take a different path through a task on Tuesday than it took on Monday, and both paths might look reasonable in isolation.
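A rough way to see the compounding is a back-of-the-envelope calculation. The sketch below assumes, purely for illustration, that each step in an agent loop reproduces the previous run's choice with the same probability and that steps are independent. Real agents will not behave this neatly, and the 0.95 figure is a made-up input, not a measurement.

```python
def path_repeat_probability(step_agreement: float, steps: int) -> float:
    """Chance that an entire multi-step path repeats exactly.

    Assumes every step matches the earlier run independently with
    probability `step_agreement` (an illustrative simplification).
    """
    if not 0.0 <= step_agreement <= 1.0:
        raise ValueError("step_agreement must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return step_agreement ** steps


# Hypothetical input: each step agrees with the earlier run 95% of the time.
for steps in (1, 5, 10, 20):
    print(steps, round(path_repeat_probability(0.95, steps), 3))
```

Even with high per-step agreement, the chance of an identical end-to-end path falls quickly as the task gets longer, which is why consistency has to be engineered around the agent rather than assumed of it.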

In a consumer context, this is tolerable. If an AI assistant suggests a slightly different restaurant today than yesterday, nobody is harmed. In a government context, the same unpredictability becomes a problem. If an agent processes benefits applications differently on different days, that is an equal protection issue. If it classifies procurement responses inconsistently, that is a fairness issue. If it logs different actions on different runs, that is an audit issue.

The MIT Sloan researchers studying agentic AI in clinical settings found that eighty percent of the work in deploying an agent was not technical work. It was the unglamorous work of data engineering, stakeholder alignment, governance, and workflow integration. That finding generalizes. In regulated contexts, the agent is the easy part. The guardrails are the hard part, and they are where the real investment has to go.

Government and regulated industries operate under constraints that commercial enterprises do not. Four factors make the human-in-the-loop question fundamentally different for public sector work.

Public trust is the product. A private company that deploys a flawed agent can apologize, compensate affected customers, and move on. A government agency that deploys a flawed agent damages the trust citizens place in their institutions. That damage is expensive to repair and sometimes irreversible.

Regulatory requirements are non-negotiable. Agents operate within the same legal framework as human employees. HIPAA, FERPA, the Privacy Act, and industry-specific regulations do not relax because the decision was made by software. If anything, they are interpreted more strictly.

Decisions affect real citizens with limited recourse. A citizen who is denied a benefit, flagged as non-compliant, or routed through an automated process has few options if the decision was wrong. Due process requires explainability, and explainability requires the kind of audit trails that only come from deliberate governance.

Transparency is mandatory. FOIA requests, inspector general audits, legislative oversight, and public records laws all require that government agencies be able to explain their decisions after the fact. Agents that cannot produce reconstructible decision trails are agents that cannot survive a public records request.

A well-governed agentic system implements guardrails at multiple layers, not just one. Five matter most in regulated contexts.

Approval gates. Human sign-off is required before the agent takes any high-impact action. Sending external communications. Modifying a system of record. Committing financial transactions. Publishing content to the public. The threshold for what constitutes high-impact should be set conservatively and revisited as organizational confidence grows.
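An approval gate can be sketched in a few lines. This is a minimal illustration, not a reference implementation: the action names, the `ProposedAction` type, and the `execute_with_gate` function are all hypothetical, and a real deployment would tie `approved_by` to an authenticated reviewer identity.

```python
from dataclasses import dataclass
from typing import Callable, Optional

# Hypothetical action names, mirroring the examples in the text.
HIGH_IMPACT_ACTIONS = {
    "send_external_communication",
    "modify_official_record",
    "commit_financial_transaction",
    "publish_public_content",
}


@dataclass
class ProposedAction:
    kind: str
    payload: dict


class ApprovalRequired(Exception):
    """Raised when a high-impact action arrives without human sign-off."""


def execute_with_gate(
    action: ProposedAction,
    run: Callable[[ProposedAction], str],
    approved_by: Optional[str] = None,
) -> str:
    # Block high-impact actions until a named human has approved them.
    if action.kind in HIGH_IMPACT_ACTIONS and not approved_by:
        raise ApprovalRequired(f"{action.kind} requires human sign-off")
    return run(action)


# Usage: the same action is held without approval and runs with it.
payment = ProposedAction("commit_financial_transaction", {"amount": 1200})
try:
    execute_with_gate(payment, run=lambda a: "executed")
except ApprovalRequired as exc:
    print("held for review:", exc)
print(execute_with_gate(payment, run=lambda a: "executed", approved_by="reviewer-17"))
```

Keeping the high-impact list in one place makes the threshold easy to tighten or relax as confidence grows.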

Audit trails. Every agent decision is logged in a form that can be inspected after the fact. Every perception, every reasoning step, every tool call, every output. This is not just nice to have. It is how you respond to a FOIA request six months later, how you explain a decision to an administrative law judge, and how you identify patterns of drift before they become systemic issues. Observability platforms purpose-built for LLM-based systems are emerging, and they are worth the investment.
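As one possible shape for such a trail, the sketch below keeps structured entries in memory and chains them with SHA-256 hashes so that later edits are detectable. The hash chain is my own illustrative design choice, not something a particular observability platform prescribes, and the `AuditTrail` class and its field names are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone


def _digest(body: dict) -> str:
    # Stable serialization so the same entry always hashes the same way.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class AuditTrail:
    """Append-only log where each entry commits to the one before it."""

    GENESIS = "0" * 64

    def __init__(self) -> None:
        self.entries = []
        self._last_hash = self.GENESIS

    def record(self, run_id: str, event_type: str, detail: dict) -> dict:
        entry = {
            "run_id": run_id,
            "event_type": event_type,  # e.g. perception, reasoning, tool_call, output
            "detail": detail,          # must be JSON-serializable
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "prev_hash": self._last_hash,
        }
        entry["hash"] = _digest(entry)
        self._last_hash = entry["hash"]
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        # Recompute every hash and check each link back to the start.
        prev = self.GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev or entry["hash"] != _digest(body):
                return False
            prev = entry["hash"]
        return True


trail = AuditTrail()
trail.record("run-001", "tool_call", {"tool": "search_policy_docs", "query": "leave policy"})
trail.record("run-001", "output", {"summary": "Draft answer prepared for review"})
print(trail.verify())
```

In production the entries would go to durable, access-controlled storage. The in-memory list here only keeps the example self-contained.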

Scope boundaries. The agent is explicitly told what it can and cannot do. Which tools it can call. Which data sources it can access. Which topics are out of scope. These boundaries are enforced at multiple layers — in the system prompt, in the permissions granted to the underlying service account, and in the monitoring that flags out-of-scope attempts. Defense in depth is the principle.
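The application-level layer of that defense can be as simple as an allowlist check that also records out-of-scope attempts for monitoring. The sketch below is hypothetical: `ScopePolicy`, the tool names, and the source names are invented for illustration, and it does not replace the permissions enforced on the underlying service account.

```python
from dataclasses import dataclass, field


@dataclass
class ScopePolicy:
    allowed_tools: frozenset
    allowed_sources: frozenset
    violations: list = field(default_factory=list)

    def check(self, tool: str, source: str) -> bool:
        """Return True if the call is in scope; otherwise log the attempt."""
        in_scope = tool in self.allowed_tools and source in self.allowed_sources
        if not in_scope:
            # Kept for the monitoring layer to review.
            self.violations.append((tool, source))
        return in_scope


# Hypothetical policy for an internal policy-research agent.
policy = ScopePolicy(
    allowed_tools=frozenset({"search_policy_docs", "summarize"}),
    allowed_sources=frozenset({"internal_policy_library"}),
)
print(policy.check("summarize", "internal_policy_library"))
print(policy.check("send_email", "internal_policy_library"))
print(policy.violations)
```

An allowlist fails closed: anything not explicitly granted is refused, which is the safer default when the agent's behavior is not fully predictable.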

Escalation paths. Clear rules define when the agent must stop and hand off to a human. Low confidence in an output. Unexpected errors. Actions the agent recognizes as outside its scope. Tasks involving sensitive populations. The escalation should be automatic, not optional, and the human on the receiving end should have full context about why the agent stopped.
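Those rules translate naturally into a function that returns the reasons for a hand-off, so the human receives the context along with the case. This is a sketch under assumptions: the `StepResult` fields, the reason labels, and the 0.8 confidence floor are hypothetical, and how a trustworthy confidence score is obtained is left open.

```python
from dataclasses import dataclass
from typing import List, Optional

CONFIDENCE_FLOOR = 0.8  # hypothetical threshold, to be tuned on real data


@dataclass
class StepResult:
    confidence: float
    error: Optional[str] = None
    in_scope: bool = True
    sensitive_population: bool = False


def escalation_reasons(result: StepResult, floor: float = CONFIDENCE_FLOOR) -> List[str]:
    """Return every rule that fired; an empty list means the agent may continue."""
    reasons = []
    if result.confidence < floor:
        reasons.append("low_confidence")
    if result.error is not None:
        reasons.append("unexpected_error")
    if not result.in_scope:
        reasons.append("out_of_scope")
    if result.sensitive_population:
        reasons.append("sensitive_population")
    return reasons


def must_escalate(result: StepResult) -> bool:
    # Escalation is automatic: any single reason is enough to stop.
    return bool(escalation_reasons(result))


result = StepResult(confidence=0.62, sensitive_population=True)
if must_escalate(result):
    print("hand off to human:", escalation_reasons(result))
```

Returning the full list of reasons, rather than a bare yes or no, is what lets the reviewer see why the agent stopped.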

Bias and quality monitoring. Continuous evaluation of agent outputs for fairness, accuracy, and drift. This is an ongoing operational function, not a pre-launch checklist. A system that was fair at launch can drift over time as the underlying model is updated, as training data shifts, or as the population of cases changes. Monitoring is how you catch that drift before it produces a disparate impact finding.
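One simple metric such monitoring might track is the ratio between the lowest and highest approval rates across groups. The sketch below is illustrative only: the function names and sample data are invented, and the 0.8 alert level borrows the four-fifths rule of thumb from US employment-selection guidance as a placeholder, not as the legal standard for any particular program.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

ALERT_RATIO = 0.8  # placeholder threshold; set with counsel and program staff


def approval_rates(decisions: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Approval rate per group from (group, approved) pairs."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        approved[group] += int(ok)
    return {group: approved[group] / totals[group] for group in totals}


def disparity_ratio(rates: Dict[str, float]) -> float:
    """Lowest group rate divided by the highest; 1.0 means parity."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody approved in any group, so no gap between groups
    return min(rates.values()) / highest


# Invented sample: one review window of agent decisions.
window = (
    [("group_a", True)] * 8 + [("group_a", False)] * 2
    + [("group_b", True)] * 5 + [("group_b", False)] * 5
)
rates = approval_rates(window)
ratio = disparity_ratio(rates)
if ratio < ALERT_RATIO:
    print("review needed:", rates, round(ratio, 3))
```

Running a check like this on every review window, and comparing the result over time, is what turns monitoring from a launch checklist into an operational function.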

The prudent path for any government agency new to agentic AI is to deploy internally before deploying externally. Agents that analyze internal policy documents, triage internal tickets, support internal research, or summarize internal meetings generate operational value without exposing citizens to the risks of an autonomous system making mistakes in public.

The internal track also generates the operational knowledge your agency needs to govern external deployments responsibly when the time comes. You learn what your audit trails actually look like. You discover which edge cases your scope boundaries missed. You refine your escalation thresholds based on real data. By the time you are ready to deploy something citizen-facing, your governance muscle is built.

Agentic AI will bring real value to government work. Requirements analysis. Procurement review. IT service management. Policy research. Internal reporting. All are legitimate and high-value applications. But the value is only realized when the governance comes first. Human-in-the-loop is not a feature you bolt onto a production system. It is the product that makes the production system responsible in the first place.
